AiGanak-SLM-1B

Secure, Cost-Efficient Enterprise Intelligence at the Edge

A proprietary 1B-parameter Small Language Model optimized for 128k context windows

The Mission:

To provide a 1B-parameter model with a 128k context window that outperforms generic LLMs on structured-data tasks and in privacy-sensitive environments.

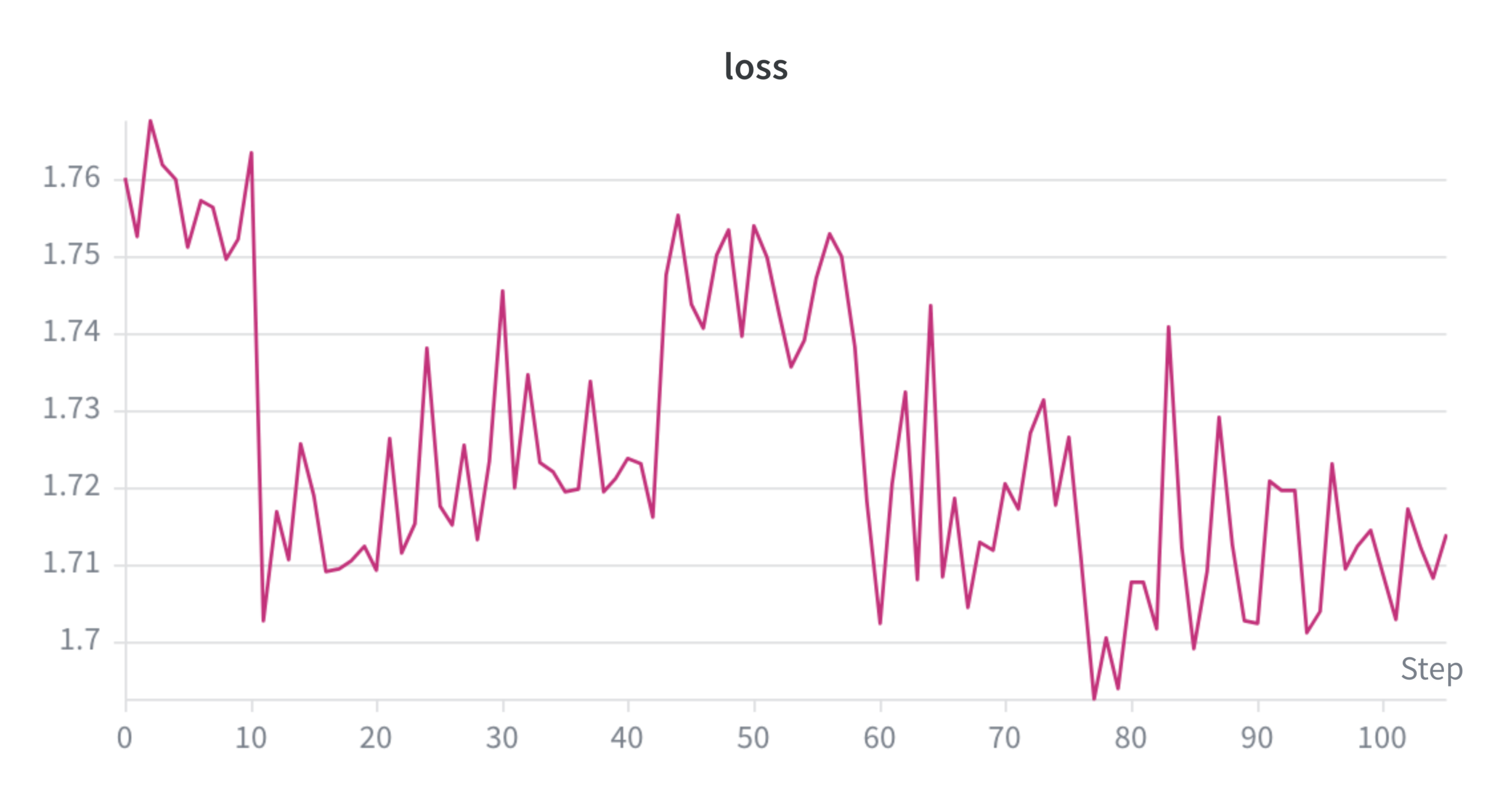

Current Convergence State:

Phase 2 (Optimization)

AiGanak-SLM-1B has reached Phase 2 convergence, marking the shift from foundational training to high-performance logic optimization. The model is currently optimized for a 128k context window, large enough to securely process entire document libraries or complex product manuals in a single pass. Development now focuses on refining structural logic and tabular reasoning so that the model can handle complex data environments while remaining fully executable on-premise. Because the model runs on-premise, it eliminates external "Token Taxes" and guarantees 100% data sovereignty for enterprise-grade applications.
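To make the "single pass" claim concrete, the sketch below estimates whether a set of documents would fit inside a 128k context window. It is illustrative only and not part of AiGanak-SLM-1B: the function names, the reading of "128k" as 131,072 tokens, the 4-characters-per-token heuristic, and the 2,048-token output reserve are all assumptions. A real check would count tokens with the model's own tokenizer.

```python
import math
from typing import Iterable

# Assumption: "128k" is read as 128 * 1024 tokens; adjust if the model uses 128,000.
CONTEXT_WINDOW_TOKENS = 128 * 1024
# Assumption: rough heuristic for English text; a real tokenizer will differ.
CHARS_PER_TOKEN = 4.0


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Return a rough token estimate from character count, rounded up."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_single_pass(
    documents: Iterable[str],
    reserved_for_output: int = 2048,  # hypothetical budget left for the model's answer
    context_window: int = CONTEXT_WINDOW_TOKENS,
) -> bool:
    """Check whether all documents together fit in one context window."""
    budget = context_window - reserved_for_output
    total = sum(estimate_tokens(doc) for doc in documents)
    return total <= budget
```

Under these assumptions, a 400,000-character manual (about 100,000 estimated tokens) fits in one pass, while a 600,000-character library (about 150,000 estimated tokens) would need to be split.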