AiGanak-SLM-1B

Secure, Cost-Efficient Enterprise Intelligence at the Edge

A proprietary 1B-parameter Small Language Model optimized for 128k context windows

Last updated: May 2026. By: Akshay Bankar

The Mission:

To provide a 1B-parameter model with a 128k context window that outperforms generic LLMs on structured-data tasks and in privacy-sensitive environments.

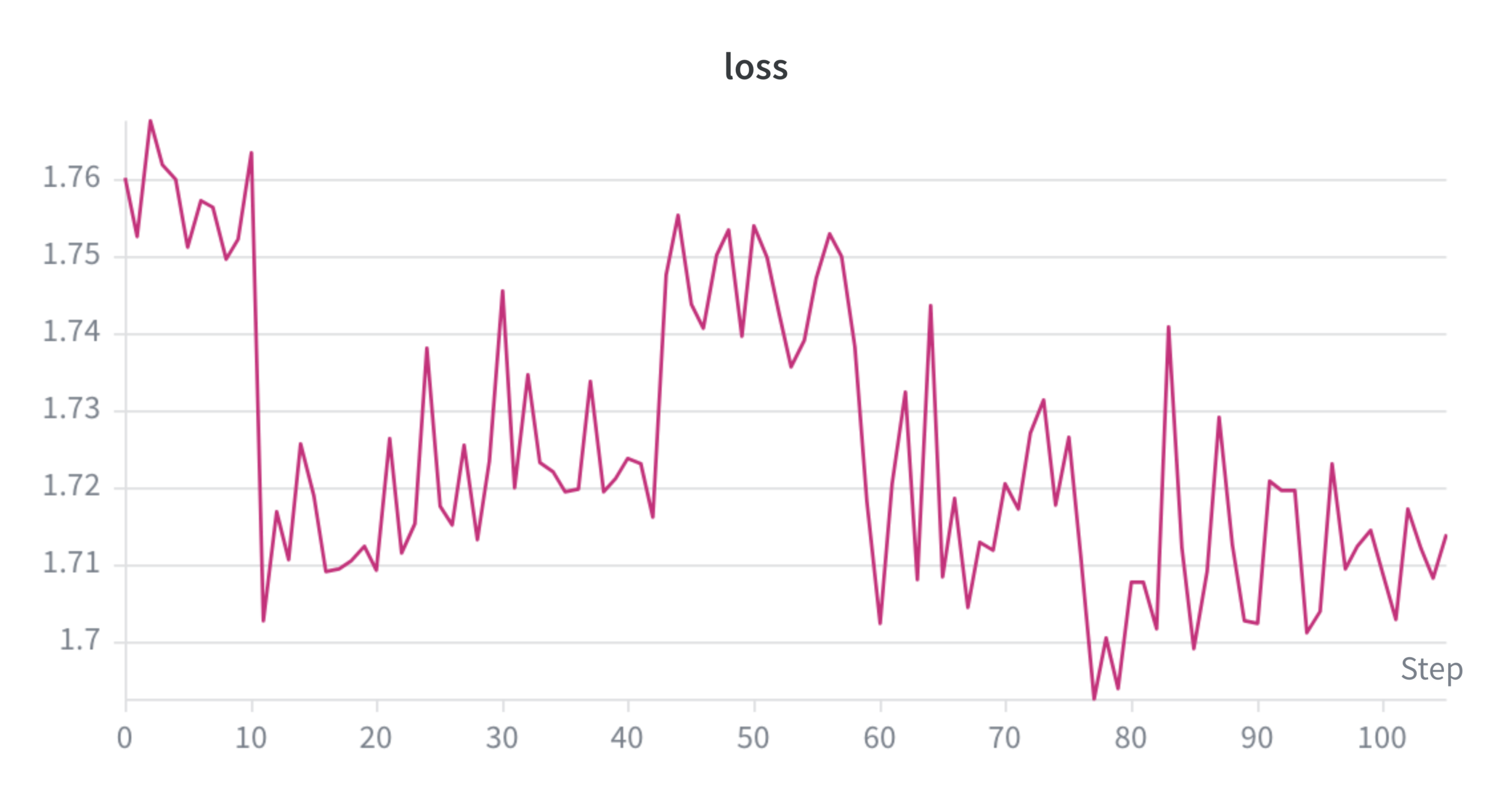

AiGanak-SLM-1B has reached Phase 2 Convergence, marking the shift from foundational training to logic optimization. The model is currently optimized for a 128k context window, large enough to process entire document libraries or complex product manuals securely in a single pass. Development is now focused on refining structural logic and tabular reasoning so that the model can handle complex data environments while remaining fully executable on-premise. Running on-premise removes external "Token Taxes" (per-token fees paid to hosted model providers) and keeps data under the enterprise's own control, preserving data sovereignty for enterprise-grade applications.

Architecture

A defining characteristic of this architecture is its 128k context window, which allows the model to ingest and maintain coherence over large volumes of information, such as entire technical manuals or complex legal datasets, in a single pass. This is achieved through attention mechanisms and memory-efficient scaling techniques that avoid the quadratic growth in compute and memory that standard self-attention incurs as context length increases. This design keeps the model "aware" of long-range dependencies, making it well suited to deep Retrieval-Augmented Generation (RAG) systems.
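To make the quadratic cost concrete, the following is a rough arithmetic sketch, not a description of AiGanak-SLM-1B's actual attention mechanism, which is not specified here. It assumes that "128k" means 131,072 tokens and that attention scores are stored as 16-bit floats. The sliding-window variant and its 4,096-token window are hypothetical, included only as one example of a scheme that grows linearly with context length.

```python
"""Back-of-the-envelope memory for attention scores at long context lengths."""

CONTEXT_TOKENS = 128 * 1024  # assumption: "128k" means 131,072 tokens
BYTES_PER_SCORE = 2          # assumption: scores held as 16-bit floats
GIB = 2 ** 30


def full_attention_score_bytes(seq_len: int,
                               bytes_per_score: int = BYTES_PER_SCORE) -> int:
    """Bytes for one head's full seq_len x seq_len score matrix (quadratic)."""
    return seq_len * seq_len * bytes_per_score


def windowed_attention_score_bytes(seq_len: int, window: int,
                                   bytes_per_score: int = BYTES_PER_SCORE) -> int:
    """Bytes when each token attends to at most `window` tokens (linear).

    Hypothetical sliding-window scheme, used for comparison only.
    """
    return seq_len * min(window, seq_len) * bytes_per_score


if __name__ == "__main__":
    full = full_attention_score_bytes(CONTEXT_TOKENS)
    windowed = windowed_attention_score_bytes(CONTEXT_TOKENS, window=4096)
    print(f"full attention:     {full / GIB:.1f} GiB per head, per layer")
    print(f"windowed (w=4096):  {windowed / GIB:.1f} GiB per head, per layer")
```

Under these assumptions, a fully materialized score matrix takes 32 GiB per head per layer, against 1 GiB for the windowed variant. Real implementations often avoid materializing the full matrix, so the figures mainly illustrate how quickly the cost grows with context length.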

The training and optimization pipeline is currently tailored for on-premise execution: the architecture is designed to run efficiently on local, consumer-grade, or private enterprise hardware. This eliminates the need for expensive, high-bandwidth connections to external cloud clusters. The architecture is currently being optimized to address remaining weaknesses in tabular reasoning, so that the model can interpret and generate structured data with the precision of a much larger LLM while maintaining a lean operational profile.
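As a rough illustration of why a 1B-parameter model suits local hardware, the sketch below estimates the memory needed for the weights alone at several numeric precisions. It assumes exactly 1,000,000,000 parameters. The listed precisions are illustrative, since the precision AiGanak-SLM-1B ships in is not stated here, and the estimate excludes activations and the key-value cache, which grow with context length.

```python
"""Approximate weight memory for a model at different numeric precisions."""

N_PARAMS = 1_000_000_000  # assumption: "1B" taken as exactly one billion


def weight_memory_gb(n_params: int, bits_per_param: int) -> float:
    """Gigabytes (10^9 bytes) needed to hold the weights alone."""
    return n_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    # Illustrative precisions only; not a statement of supported formats.
    for label, bits in [("fp32", 32), ("fp16", 16), ("int8", 8), ("int4", 4)]:
        print(f"{label}: {weight_memory_gb(N_PARAMS, bits):.1f} GB")
```

At 16-bit precision the weights come to about 2 GB, which fits within the memory of many consumer GPUs and laptops. Long-context inference adds further memory on top of this.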